AI and Accounting Combined

Artificial intelligence is transforming the accounting landscape. Work processes are being redefined, priorities are being adjusted, and time is being redirected. With Shewing The Fly’s AI-enhanced platform, accounting and audit teams experience significant time savings, remarkable growth potential, and impeccable financial management. However, even greater advancements are on the horizon.

The Shewing The Fly Platform

Maximizing Current Impact, Evolving for the Future

Use Shewing The Fly’s sophisticated data features and GenAI to automate workflows for tasks such as lease accounting, audits, and revenue recognition, all within a unified platform. Streamline intricate activities, improve precision, and stay aligned with regulatory requirements.

Automated Workflows: More Time for Important Matters

UNDER THE HOOD: Discover Our Data Capabilities

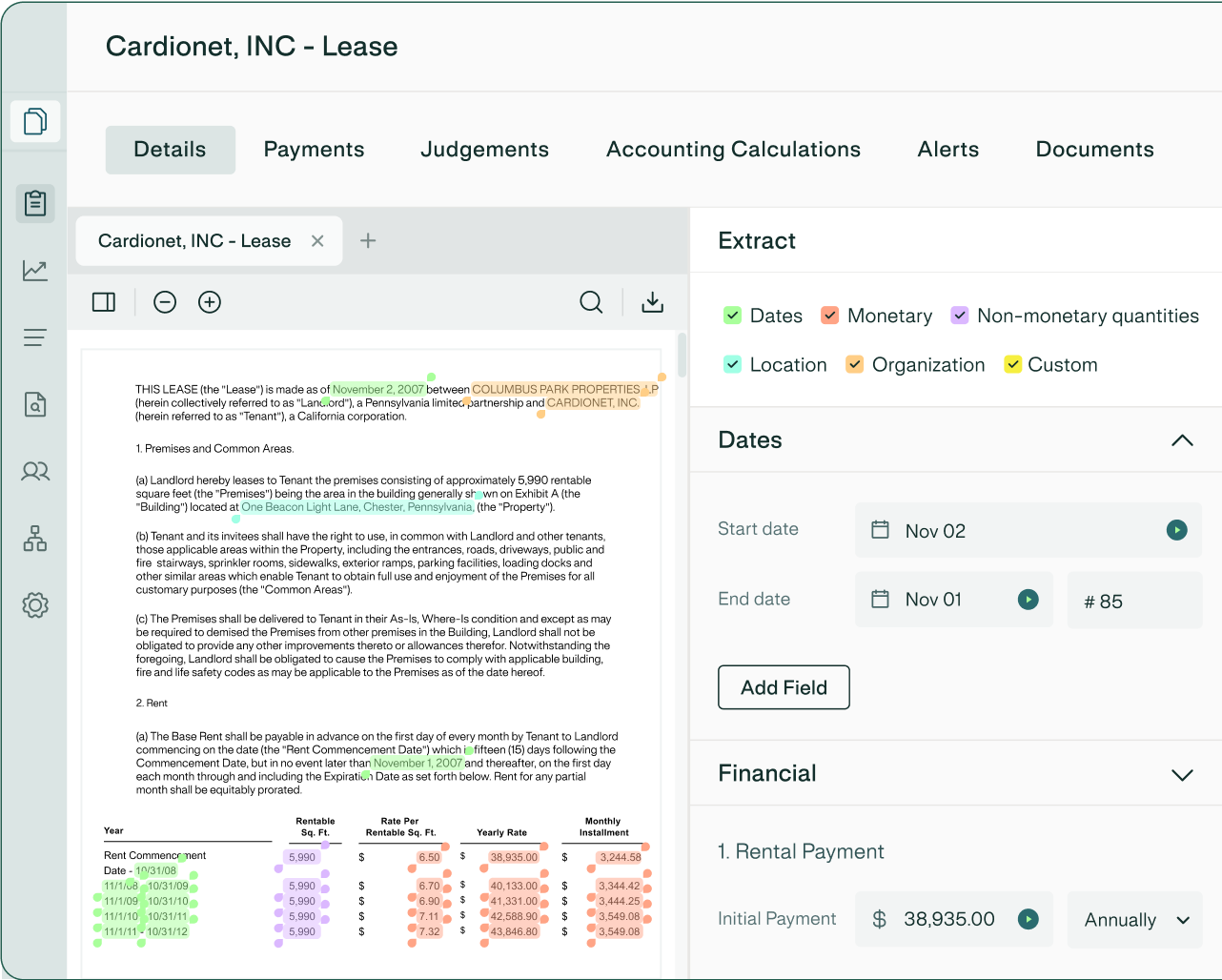

Data Extraction

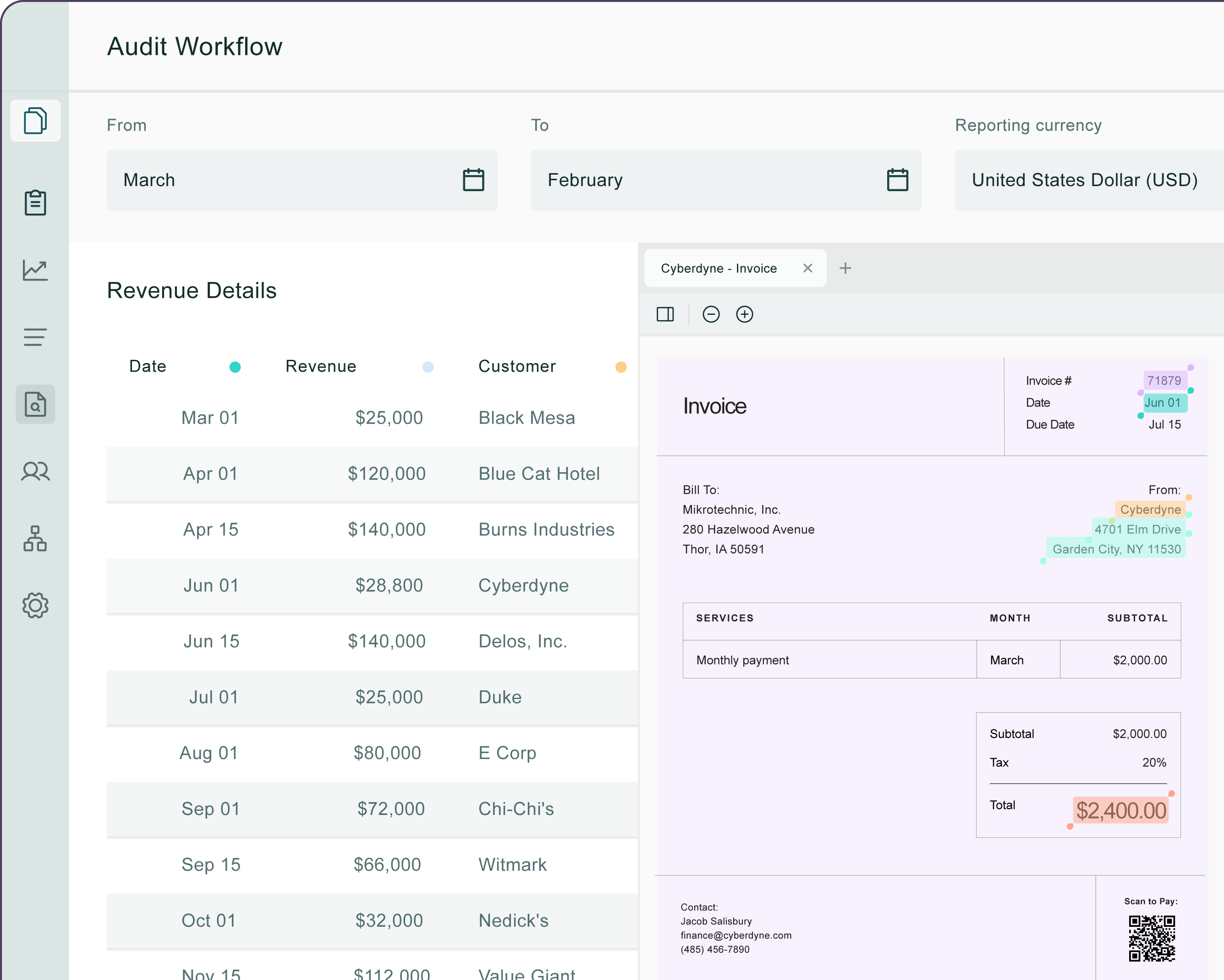

Data Validation

Data Reconciliation

GenAI: Driving Our Innovation

Revolutionizing Accounting Practices

Shewing The Fly’s platform uniquely employs GenAI for strategic accounting decisions, including:

- Examining uploaded financial documents to deliver customized interpretations of IFRS policies.

- Responding to complex financial inquiries, such as locating and assessing embedded leases of a specific value.

- Guiding accounting leaders on the latest compliance requirements and financial standards.

Modern Accounting Workflows

It’s the small details that elevate your team’s performance

Shewing The Fly makes accountants’ daily work quicker, smoother, and more precise. Start the platform, review your dashboard, and get to work with complete confidence.

Begin with minimal to no configuration.

Link all of your financial and accounting systems.

Quickly extract data from contracts and systems.

View the most pertinent information at a glance.

Receive automated alerts when something requires your attention.

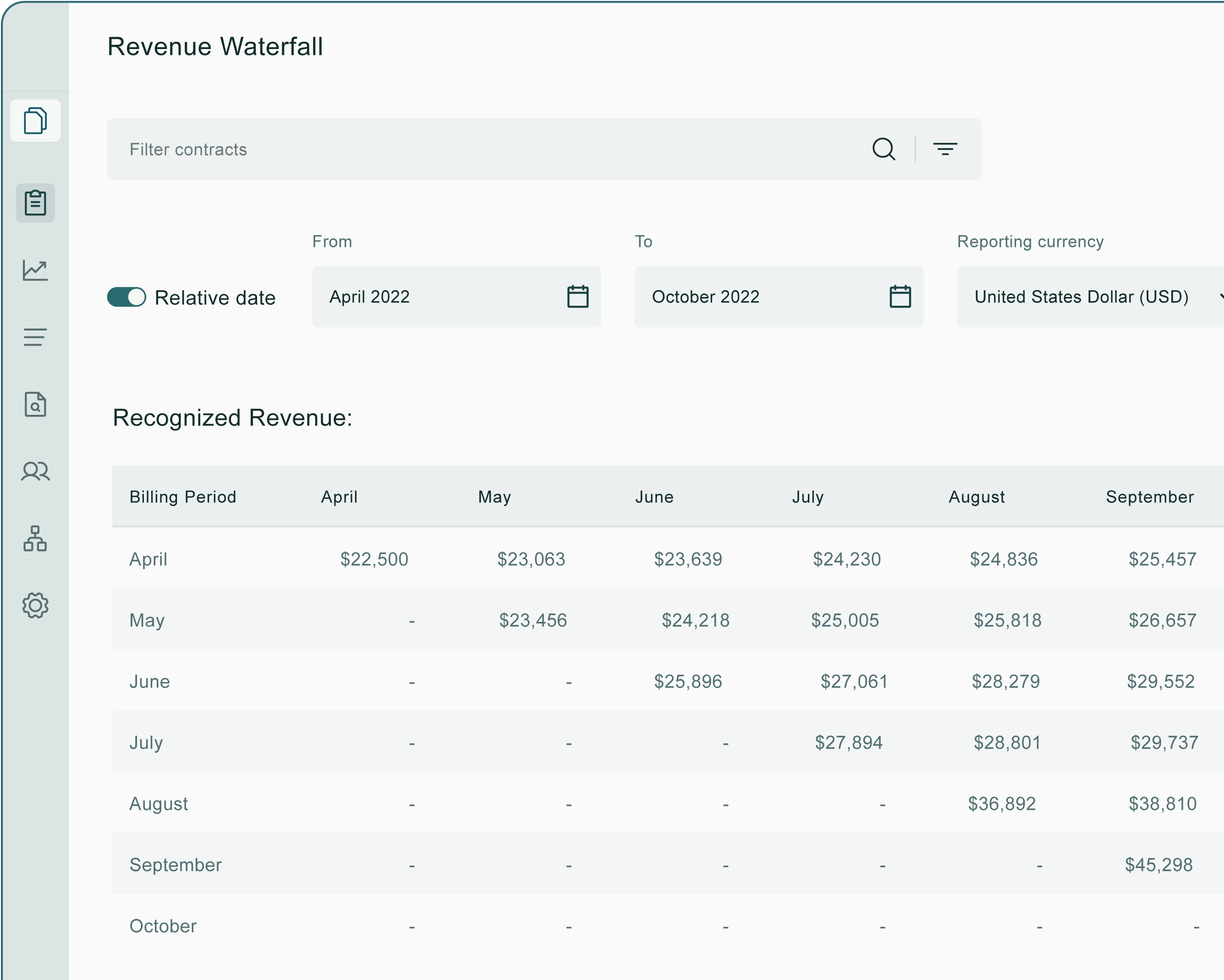

Report immediately to finance leadership and auditors.

AI-Enhanced Financial Precision

Here’s what AI looks like when it’s on your side

We integrate proprietary models, external data, and a clear vision for the future of accounting. The platform automatically checks your numbers against reporting and compliance standards, highlighting discrepancies and potential issues before they affect the business.

Impeccable Financial Oversight

Accurate and Compliant Reporting Workflows

Intelligent Reporting and Analytics

Enhance Reporting

What people are saying.

Integrations

Absolutely, Shewing The Fly supports that as well.

Link up with CRM, billing, and ERP data sources, and synchronize unstructured data like spreadsheets and PDF contracts—all within a single platform.